January 9, 2014

by Rob Mitchum, Computation Institute

To the naked eye, the brain is pretty unimpressive: just a pink, wrinkly lump of an organ. To see the true complexity of the brain, one must find a way to observe the intricate patterns of electrical and chemical communication that underlie all of the brain’s functions. But for decades, scientists were largely limited to watching the electrical activity of one neuron at a time, akin to trying to comprehend a symphony by listening to just a single violin in the orchestra. In Nicholas Hatsopoulos’ talk for the University of Chicago Research Computing Center’s Visualizing the Life of the Mind series, he explained the recent technological advances that have allowed his laboratory to reveal more of the brain’s busy conversation.

Hatsopoulos, a professor of organismal biology and anatomy at UChicago, specifically studies the behavior of the primary motor cortex, the area of the brain responsible for moving parts of the body. In the 1930s, neurosurgeon Wilder Penfield discovered that he could activate the muscles of anesthetized patients by stimulating different points in this area, leading him to draw up the famous motor homunculus to illustrate how body parts are represented in the brain. Subsequent research found, unsurprisingly, that the borders within the motor cortex weren’t quite so discrete as the homunculus suggested, Hatsopoulos said, but still suggested the existence of a rough map of the body in the brain.

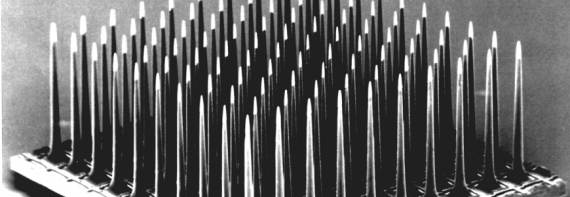

But a brain doesn’t work like a piano, where activating one neuron automatically contracts one muscle somewhere in the body. The vast range of motions possible for a body requires control by a complicated neural code, one that can’t possibly be deduced from the activity of a single neuron. So Hatsopoulos’ laboratory turned to ensemble recording using a microscopic panel of 100 electrodes squeezed into a 4 mm square space. Instead of one neuron at a time, the panel could record from over 100 neurons simultaneously, giving the researchers a broader view of the neural activity happening in that small patch of the brain.

“It’s a window into looking at large-scale spatiotemporal patterns almost impossible to see with single neurons,” Hatsopoulos said. “Neurons are not sitting in isolation, they’re in a network, so we need to study these large-scale patterns.”

Experiments using the electrode panel revealed both spatial and temporal patterns while subjects performed simple motor tasks, such as moving a computer cursor or reaching for an object. Hatsopoulos’ team observed the propagation of a particular wave pattern, called local field potentials, across the region of the motor cortex responsible for the motion of the arm and hand during the task. Despite the seemingly chaotic activity, these waves tended to move along a consistent axis, first flowing one way when a new target was presented to the subject, then reversing direction.

But the naked eye isn’t enough to understand the relationship between these neural firing patterns and motor activity. Truly cracking the code required advanced computation, work performed on UChicago’s biomedicine-dedicated Beagle supercomputer by Taka Takahashi, a post-doc in Hatsopoulos’ lab. Takahashi used statistical methods to see if the activity of the recorded neurons could be used to reveal how the brain is organized and structured.

“If it’s cloudy and then it rains in the future, you can infer a causal relationship if you see it enough times,” Hatsopoulos said. “[Similarly,] if you can predict the activity of a neuron based on past activity and the response of other neurons, there is evidence of sequential patterning and functional connections between the two neurons.”
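The logic of that quote can be illustrated with a small simulation. The sketch below is not the method used in Hatsopoulos’ lab, which the talk did not describe in detail; it is a simple Granger-style comparison on made-up data, and the names `simulate` and `prediction_gain` are hypothetical. It assumes two simulated neurons, A and B, where B is more likely to fire right after A does, and asks how much better B’s activity can be predicted once A’s past activity is added to the model.

```python
import numpy as np


def simulate(n_bins=5000, seed=0):
    """Simulate two binary spike trains (illustrative data, not real recordings).

    Neuron A fires at random. Neuron B fires with high probability
    in the time bin right after A fired, and rarely otherwise.
    """
    rng = np.random.default_rng(seed)
    a = (rng.random(n_bins) < 0.2).astype(float)
    a_previous = np.concatenate([[0.0], a[:-1]])  # A's activity one bin earlier
    p_b = np.where(a_previous == 1.0, 0.7, 0.05)  # assumed firing probabilities
    b = (rng.random(n_bins) < p_b).astype(float)
    return a, b


def prediction_gain(target, source, lag=1):
    """Fractional drop in squared error when the past of `source` is added
    to a linear model that predicts `target` from its own past."""
    y = target[lag:]
    own_past = target[:-lag]
    other_past = source[:-lag]
    ones = np.ones_like(y)

    def squared_error(design):
        coef, *_ = np.linalg.lstsq(design, y, rcond=None)
        residual = y - design @ coef
        return float(residual @ residual)

    reduced = np.column_stack([ones, own_past])            # own history only
    full = np.column_stack([ones, own_past, other_past])   # plus the other neuron
    return 1.0 - squared_error(full) / squared_error(reduced)


a, b = simulate()
gain_a_to_b = prediction_gain(b, a)  # does A's past help predict B?
gain_b_to_a = prediction_gain(a, b)  # does B's past help predict A?
print(f"A -> B gain: {gain_a_to_b:.3f}")
print(f"B -> A gain: {gain_b_to_a:.3f}")
```

In this toy setup, knowing A’s past improves the prediction of B substantially, while knowing B’s past barely helps predict A. That asymmetry is the kind of evidence for a directional, functional connection that the quote describes, though real recordings call for models better suited to spike data and for controls against shared inputs.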

Amidst the “tangle” of neurons, the researchers found directional connections that mirrored the propagation of waves in their experiments and aligned with anatomical findings as well. Ongoing experiments are testing theories about the functional role of these directional waves, including one that proposes that the wave works similarly to a bar code scanner at a grocery checkout. As the wave travels across a section of brain, it makes the neurons in different regions more likely to fire, shaping frenetic brain activity into organized patterns.

As scientists improve their understanding of how these activity patterns drive the movement of limbs and digits, a little piece of science fiction comes closer to reality. Early versions of brain-controlled prosthetics, which translate the neural activity of paraplegics into the motion of a mechanical arm, have already been tested in clinical trials and achieved some promising success. But further extracting the specific instructions from the brain’s complex electrical storm, by watching and analyzing more than just one neuron at a time, will help engineers program these brain-machine interfaces to function even more like the real thing.