February 18, 2020

Where AI Meets The Outside World

While AI holds plenty of promise for science, the early returns on using AI to improve society have been mixed. As algorithms begin to drive important decisions in processes such as loan approval, hiring, bail, and the distribution of social services, new ethical, legal, and bias-related questions arise. What policies and practices will be necessary to ensure that AI produces more benefit than harm in these life-changing areas?

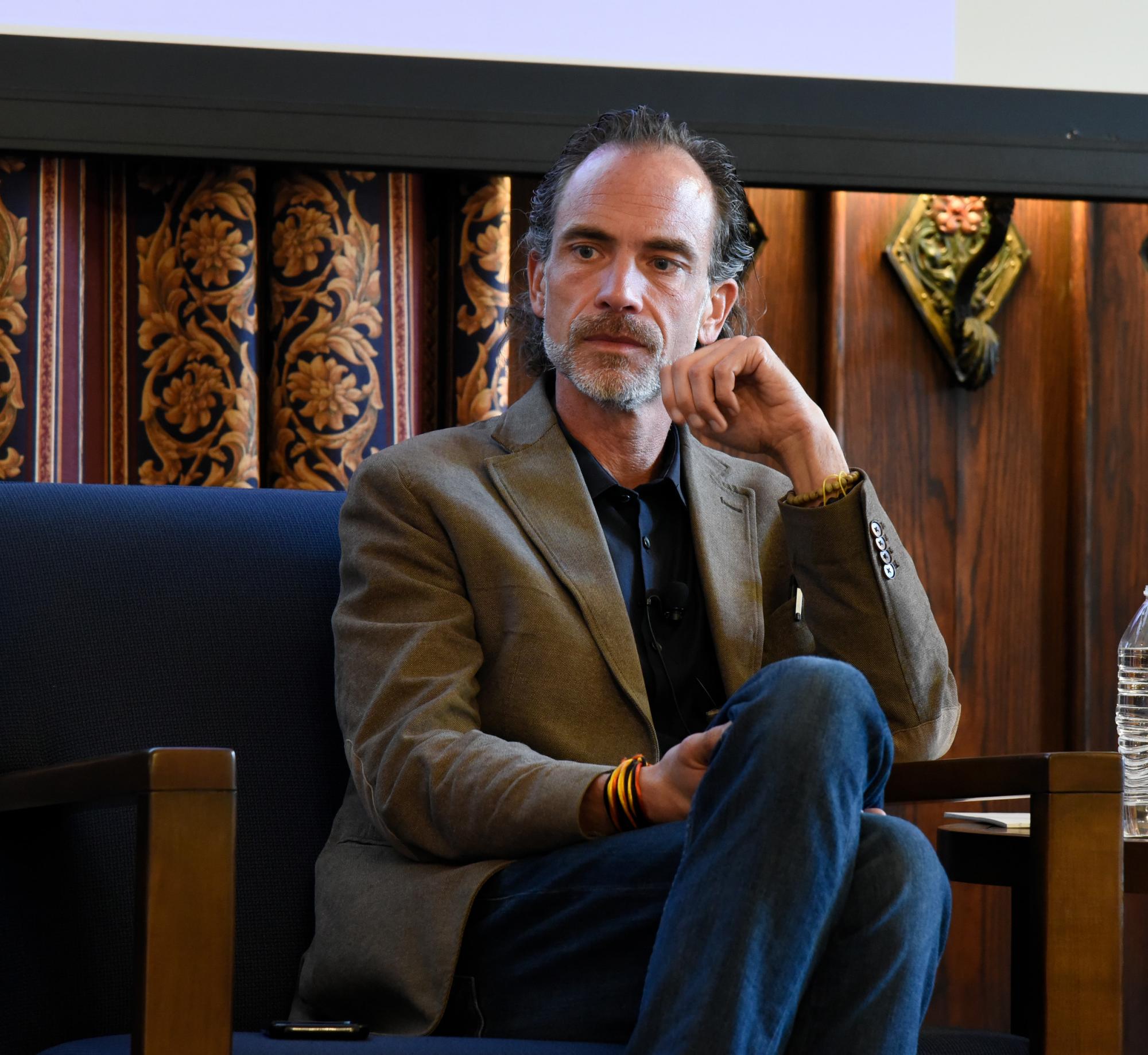

A panel moderated by Crain’s Chicago Business journalist John Pletz convened experts from multiple fields to address this timely question. Alongside Rick Stevens, the panel included Katherine Strandburg, the Alfred Engelberg Professor of Law and Faculty Director of the Information Law Institute at New York University, and Eric Rice, the Associate Director and Founding Co-Director of the Center for Artificial Intelligence in Society at the University of Southern California.

None of the panelists were alarmist about the dangers of misusing AI to make policy or financial decisions (in a rarity for an AI discussion, nobody brought up The Terminator), but all voiced caution about current flaws in algorithmic approaches that could produce serious consequences. Oft-reported concerns about how biased data can corrupt supposedly impartial algorithms are magnified in sensitive applications such as alleviating homelessness, where data can be both scarce and unreliable.

Rice, who has worked on such systems with Los Angeles nonprofits and government agencies, said that overburdened social workers have trouble collecting and managing data, and data collected in research studies may not translate accurately to real-world usage.

“The ethics is very, very complicated, and sometimes it’s a computational problem, but sometimes it’s actually a human-systems relational problem, which is frustrating if you want to just model your way out of the problem,” Rice said. “But I think it’s important to understand when you’re modeling with data that’s been generated in these biased ways that you inherently are going to have biased results.”

One solution could be passive data collection from technologies such as social media and wearables, but Strandburg pointed out the privacy issues raised by that form of data gathering. Strandburg also expressed her worry about “slippage” in the application of data-driven decision-making tools, where algorithms developed for one kind of prediction are misapplied to a different setting.

“It’s not just a question of having a lot of data, but it’s a question of what data do you have,” Strandburg said. “There’s a tradeoff where if you go grab whatever data you can find, it’s not going to be representative, and not going to have the things you want. You have to make this decision about what you’re trying to predict, and very often there’s a tradeoff between what data you have and what you actually want to know.”

Another obstacle to the ethical use of AI decision-making is the lack of transparency in many algorithms, whether because of “black box” machine learning methods or proprietary software that doesn’t reveal its inner workings. For proper regulatory oversight to occur, and for the people affected by these decisions to feel that the systems are fair, it’s important that the algorithms and some form of the data used in policy decisions be made public. With this information available, Stevens is optimistic that a robust community of checks and balances would form to police these approaches.

“I think there will be a self-correction, as society becomes more and more aware of how machine learning and AI systems work; that they’re not magic, they need the data, and the data has to be representative and balanced and quality,” Stevens said. “In the long term it will correct, but in the short term we’re going to have these weird imbalances where policy is way behind practice, because there’s no high-level guidance.”

From broader society down to academic campuses, creating ethical applications of AI will also require education and interdisciplinary communication, the panelists agreed.

“I think one of the great strengths of the group that I'm a part of is, we have both social work and computer science students and faculty, and we ask each other the fundamental questions, the hard questions, that maybe in your own disciplinary silos you start to take for granted,” Rice said.